We Simulated 10,000 Scrabble Games to Make Ours Fair

How one data structure powered our game engine, hint system, and balance simulator... and how one diagonal tweak fixed a 15-point first-mover advantage.

At Candle, we’re building an app to bring couples together. Most of the app is about conversation, connection, and shared experiences, but we also wanted lightweight games that feel playful enough to use during a busy day and meaningful enough to belong inside a product for those in relationships.

So we built Love Letters, a turn-based word game for couples inspired by the crossword-tile genre.

The surprising part was not building it quickly. It was discovering that our first viable version gave the player who moved first a huge advantage.

This post is about how we built the game in three days, how we made it feel distinct inside Candle, and how we used simulation to search the design space until we found a board that felt fair enough to ship.

The goal

We wanted to build a game with a few clear properties:

familiar enough that players instantly understand it

quick enough to fit into real life

asynchronous, so couples can play over hours or days

balanced enough that it feels fair

distinct enough to feel like a Candle game, not just a reskin

That last point matters a lot. In an established genre, the challenge is not inventing everything from scratch. It’s deciding which parts are crucial, which parts are flexible, and which changes actually improve the product.

Making it feel like Candle

Love Letters lives inside Candle, so it had to feel like a it was a natural themed addition to the app, not a generic competitive word game.

That pushed us toward a few decisions early:

two players only

asynchronous matches

a smaller board than classic crossword-tile games

scoring that rewards interacting with your partner’s plays

a warmer, more playful visual style than a standard board-game UI

The main mechanic we added was a Connection Bonus: when you build on tiles your partner already played, you get extra points. That nudges the game away from parallel solo play and toward actual interaction.

We also added Spark tiles as wildcards, a Flame bonus for using all 7 tiles in a turn, and a center heart / Flame tile to make the opening feel special.

Breaking it down

We decomposed the project into a few systems:

game rules and board state

move validation

word generation, hinting, and simulation

UI and drag-and-drop interactions

online turn state and persistence

balance simulation

The most important engineering decision was to centralize the game rules instead of burying them in the UI.

We built shared helpers for board sizing, rack sizing, bonus layout, tile values and distribution, placement validation, word detection, and turn scoring. That gave us one rules engine we could reuse in three places:

the live game

move generation and hinting

simulation for balancing

A GAD-what?

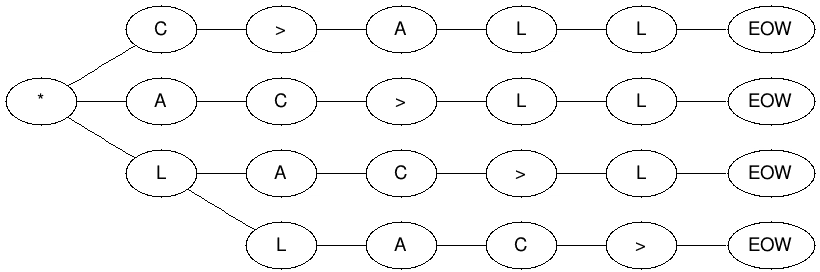

For move generation, we used a GADDAG, a word-graph data structure described by Steven Gordon in 1994. At a high level, a normal trie or directed acyclic word graph (DAWG) is great if you only care about building words from left to right. Scrabble-style games are harder, because you usually build through an existing tile on the board, not just from the beginning of a word. Gordon’s key idea was to encode words in a form that lets you grow outward from an anchor square in both directions, which avoids a lot of wasted prefix search and makes move generation much faster. In Gordon’s paper, the tradeoff is explicit: a GADDAG uses substantially more memory than a DAWG, but generates moves more than twice as fast on average.

That was a great fit for Love Letters. We used the same GADDAG-based engine for legal move generation, validation, simulations, and eventually hints. In practice, that meant one algorithm could answer four different product questions:

is this move legal?

what words did this placement form?

what are all legal moves from this board state?

what should the simulator do from here?

Gordon’s algorithm is built around anchor squares - playable squares adjacent to existing tiles - and a bidirectional search that extends away from those anchors while respecting board constraints. That is what made it useful for both real gameplay and large-scale simulations: the simulator was not inventing approximate moves or using a toy ruleset; it enumerated real legal moves under the same board, rack, dictionary, and bonus constraints as the actual game, then applied a separate turn policy to decide whether to play one of them or swap.

The first thing we tuned was pacing

We wanted the game to feel meaningfully shorter than a traditional crossword-board game while still leaving enough room for strategy. Our first viable ruleset looked like this:

11x11 board

7-tile rack

88 total tiles

+40 Flame bonus

+2 Connection Bonus per letter, capped at +10

swaps allowed only if the bag still has at least 7 tiles

That hit the pacing target almost immediately. In our base Monte Carlo simulation, median game length landed at 38 turns, which was right where we wanted it.

Then we looked at fairness.

The real problem: first-player advantage

Our base simulation looked good on pace, but bad on balance:

first-player win rate: 64.6%

second-player win rate: 34.7%

tie rate: 0.7%

That didn’t look too fair to us.

If a game feels systematically biased toward whoever starts, users would start to notice over time. Even when they cannot explain why, it makes the experience feel unfair.

The search: scoring tweaks, nearby boards, and repeated sims

Once we knew the opening was too strong, we started exploring nearby configurations: remove the center opening bonus, shift the bonus geometry around the middle of the board, test board layouts that were only small edits away from each other, and rerun the same simulations over and over.

The goal wasn’t to invent a new board configuration from scratch. It was to walk through a neighborhood of plausible boards and see which small structural changes pushed the game toward 50/50 without breaking the pacing we wanted.

The first obvious fix was to remove the extra center opening bonus. That helped immediately:

first-player win rate fell from 64.6% to 54.0%

second-player win rate rose from 34.7% to 45.4%

median turns stayed at 38

That was a useful result. It told us the center structure was integral in determining the outcome of the game.

Stronger play made the imbalance clearer

So we tested multiple turn policies, not just multiple move selectors. For playable turns, we sampled from the top slice of legal moves or chose the optimal move, depending on the policy. We also modeled the decision to swap probabilistically when the available move set looked weak. In other words, our simulator didn’t assume that a player always placed a word just because it existed. Normal users don’t always play optimally (unless they were spelling bee champs growing up)!

To address this, we sampled as follows:

sample from the top 50% of legal moves, with probabilistic swapping

sample from the top 10% of legal moves, with probabilistic swapping

always play the optimal move

The swap model depended on two signals: how many legal moves were available, and how strong the best move looked. If there were very few options, or the best available score was weak, the simulated player became more likely to swap instead of forcing a low-value play. That gave us a more believable “average-ish player” than a policy that always plays any legal move it can find.

Even after removing the center opening bonus, first-player advantage persisted across all of them:

average-ish play: 54.0%

top 10%: 56.5%

optimal: 58.4%

That told us that the “first-mover advantage” was structural and not just noise from weaker move selection.

We also tried explicit score compensation

Before landing on the final board shape, we also tested whether a simple asymmetric points rule could offset the opening edge.

We simulated second-player starting bonuses from +1 through +10 and reran each configuration at scale. The best result in that sweep was +6 points, which came out closest to fair over 5,000 simulated games.

That was a useful benchmark. It told us roughly how much opening advantage we were trying to neutralize.

But we still didn’t love it as a product decision.

A visible “second player gets extra points” rule felt like a bandaid solution, and it would be hard to explain to the players. It fixed the math, but not in a way that felt elegant or intuitive. We didn’t want the players thinking, “wait, why did my partner randomly get a point bonus for going second”?

The board search that got us there

As we kept searching nearby board layouts, we found that changing the geometry around center produced almost the same balancing effect as the +6 compensation rule for the 2nd player, without introducing a special-case rule players had to learn.

Originally, the center area had a plus-shaped arrangement of adjacent bonus squares around the middle. That structure naturally favored the opening play, because the first player could route through the center corridor and exploit those orthogonal bonuses immediately.

We tested multiple center variants before landing on the one we liked best: an x-shaped pattern around the center instead of a plus-shaped one.

The intuition was simple: with the original plus shape, the opening move can flow through the center and grab adjacent bonuses naturally. With the diagonal x shape, the first move still crosses center, but those surrounding bonus paths are less immediately exploitable.

It was a small implementation change, but a large balance change.

Under average-ish play, this got us to the first configuration that felt genuinely shippable:

first-player win rate: 50.1%

second-player win rate: 49.3%

tie rate: 0.58%

median turns: 38

Under stronger play, a mild first-player edge still remained:

first-player win rate: 53.1%

second-player win rate: 46.4%

That was acceptable for our goal. We were not trying to erase every opening advantage under highly optimized play. We wanted ordinary players to feel like the game was fair, without introducing weird special-case rules.

In other words: the x-shaped center gave us almost the same effect as the best score-compensation rule, but in a way that felt like board design instead of bookkeeping.

Why we trusted the simulations

Simulation work is easy to do badly, so a few implementation details mattered:

the simulator was written in Node/TypeScript

it reused the same core rules and dictionary tooling as production

every simulated move came from the same GADDAG-based legal move generator as the real game

when a player had no legal moves, the simulator followed the real rules: swap if the bag had at least 7 tiles, otherwise pass

when a player did have legal moves, we still modeled swap behavior probabilistically instead of assuming they always played a move

that swap probability depended on both move count and move quality: fewer available moves and lower-scoring options made swaps more likely

we tested multiple move-selection policies instead of assuming one player model

we searched across multiple nearby board configurations rather than overfitting to one hand-picked change

That mattered because creating a balanced game is a multi-dimensional effort. A change that improves win rate but breaks pacing or board texture is not really a win.

What we shipped

The version we felt best about had a few defining properties:

familiar word-game structure

11x11 board for tighter pacing

7-tile rack

shared dictionary and legal move engine

board-centric balancing, not explicit player handicaps

connection-oriented scoring so interacting with your partner is rewarded

The game is still recognizably in the Words With Friends / Scrabble family, but it is optimized for a different use case: a fast, asynchronous, relationship-native game inside Candle.

What we learned

A few takeaways stood out:

Small board changes can matter more than big scoring changes. The final board adjustment gave us nearly the same effect as a +6 second-player compensation rule, but in a much cleaner way.

Search beats guesswork. The best result did not come from one clever idea. It came from exploring nearby boards and nearby scoring rules until the pattern became obvious.

Reusable rules logic is the real accelerator. The only reason we could move this fast was because one rules engine powered UI, validation, hinting, and simulation.

“Feels fair” is a product requirement. For an app designed for pairs of two, fairness mattered more than squeezing every ounce of competitive depth from the rules.

Average users were the target. We still cared about stronger players, but the product goal was not tournament balance. It was a game couples would actually enjoy playing.

Final thought

Building a word game in three days sounds fast, but the speed did not come from cutting corners. It came from a few early decisions:

centralize the rules

use one fast move-generation engine everywhere

simulate with real legal moves

optimize for product feel, not theoretical purity

treat balance like an engineering problem, not just a vibes problem

That let us go from idea to playable to balanced quickly enough to ship, without guessing our way through it.

And that was probably the bigger lesson: if you are building a game inside a product, you do not need infinite time. You need the right abstractions, a tight feedback loop, and the ability to use data to your advantage.